A few weeks ago, the CEO of a mid-size company made an unexpected confession: he didn’t know much about artificial intelligence, but he had ended up purchasing corporate licenses for Claude. The reason? His employees were already using it on their own.

No approval. No oversight. And IT had no idea.

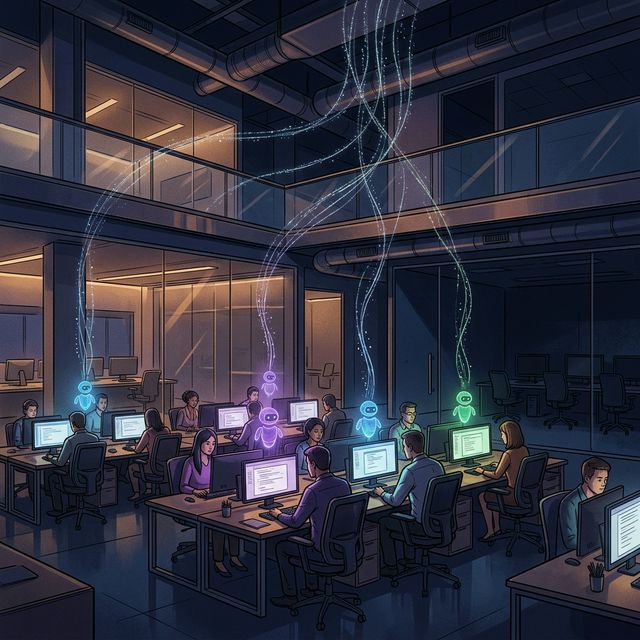

From Shadow IT to Shadow AI

If you’ve been in technology long enough, you know the concept of Shadow IT: when employees adopt tools without going through the IT department. Dropbox instead of the corporate file system. WhatsApp instead of Teams. Trello instead of the official project management tool.

Now imagine that same phenomenon, but with artificial intelligence.

Welcome to BYOAI: Bring Your Own AI.

Employees paying for their own licenses of ChatGPT, Claude, Copilot, or Gemini. Using them every day to draft reports, analyze data, and prepare presentations, all with corporate information. Customer data. Internal strategies. Financial figures.

All flowing to servers the company doesn’t even know exist.

The numbers that should worry you

55%+ of employees already use AI tools not approved by IT

Source: Gartner 2025 Digital Worker Experience Survey

These aren’t isolated cases. It’s a widespread pattern happening right now, in companies of every size and sector.

What’s most striking is that many of these employees act with good intentions: they want to be more productive, solve problems faster, deliver better work. The problem isn’t the intent. It’s the invisibility.

Why we call it “Agentic Leak”

At ZeroNet, we’ve coined the term Agentic Leak to describe this phenomenon. This goes far beyond someone using ChatGPT to write an email. We’re seeing:

- 🤖 Autonomous agents running in the background on employee laptops

- ⚡ Automated workflows sending corporate data to AI APIs without supervision

- 🔌 Browser extensions with embedded AI processing everything on screen

- 🧠 Personal copilots connected to email, calendars, and code repositories

It’s a continuous, automated and, in many cases, completely invisible data leak.

The dilemma: ban or channel?

Some companies have opted for a total ban. Garrigues, one of the largest law firms in Spain, does not allow the use of unauthorized AI. When you handle tax, legal, and sensitive client data, a free-for-all simply isn’t an option.

But banning has a cost: you lose the productivity that AI enables. And in many cases, employees simply find ways to work around the controls.

💡 The alternative is to channel it. Provide approved, secure AI tools with clear policies on what data can be processed and what cannot. But to channel, you first need to know what’s happening.

How do you detect what you can’t see?

This is where ZeroNet comes in. Our platform already monitors the energy and network behavior of IT infrastructure. And it turns out that traffic to AI APIs leaves a detectable fingerprint: connection patterns, data volumes, known endpoints.
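To make the "known endpoints" part of that fingerprint concrete, here is a minimal sketch in Python. It is not ZeroNet's implementation: the host list and the `is_ai_endpoint` helper are assumptions made for illustration, and a real deployment would maintain a much longer, regularly updated list.

```python
# Illustrative list only (an assumption for this sketch, not a complete
# or authoritative inventory of AI API hosts).
KNOWN_AI_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
}


def is_ai_endpoint(hostname: str) -> bool:
    """Return True if the hostname is a known AI host or a subdomain of one."""
    # Normalize case, surrounding whitespace, and a trailing DNS dot.
    host = hostname.strip().lower().rstrip(".")
    return any(
        host == known or host.endswith("." + known)
        for known in KNOWN_AI_HOSTS
    )
```

Matching on hostnames (taken from DNS queries or TLS SNI, for example) only shows where traffic is going, not what is inside it, which is why it is usually combined with connection patterns and data volumes.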

With our new Agentic Leak capability, we can:

- ✅ Detect unauthorized use of AI tools across the corporate network

- ✅ Quantify how many employees are doing it and how often

- ✅ Alert IT and security teams about potentially dangerous data flows

- ✅ Report so leadership can make decisions based on real data
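The detect, quantify, and alert steps above could look roughly like this in Python. This is a hedged sketch, not the product's actual logic: the `Flow` record, the host sets, and the byte threshold are all hypothetical names and values chosen for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    """One outbound connection record (hypothetical shape for this sketch)."""
    device: str
    host: str
    bytes_sent: int


# Assumed example values: which hosts count as AI services,
# and which of those the company has approved.
AI_HOSTS = {"api.openai.com", "api.anthropic.com"}
APPROVED_HOSTS = {"api.anthropic.com"}


def summarize_unapproved(flows):
    """Detect and quantify: per-device connection count and bytes sent
    to AI hosts that are not on the approved list."""
    summary = defaultdict(lambda: {"connections": 0, "bytes_sent": 0})
    for flow in flows:
        if flow.host in AI_HOSTS and flow.host not in APPROVED_HOSTS:
            summary[flow.device]["connections"] += 1
            summary[flow.device]["bytes_sent"] += flow.bytes_sent
    return dict(summary)


def devices_to_alert(summary, byte_threshold):
    """Alert: devices whose unapproved AI traffic exceeds the threshold."""
    return sorted(
        device
        for device, stats in summary.items()
        if stats["bytes_sent"] > byte_threshold
    )
```

The per-device summary is the raw material for the reporting step: aggregate counts for leadership, with alerts reserved for the devices that move the most data.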

This isn’t about spying on anyone. It’s about giving the organization the visibility it needs to manage a phenomenon that is already happening — with or without its permission.

What you should do on Monday

If you’re a CEO, CTO, or CISO, ask yourself these three questions:

- Do you know how many of your employees use unapproved AI today? If the answer is “no,” you most likely have an Agentic Leak.

- Does your IT team have visibility into traffic to AI services? If not, you’re flying blind.

- Do you have a clear AI usage policy? And more importantly: is anyone following it?

BYOAI can’t be stopped. It can be governed.

And to govern, you first need to see.
